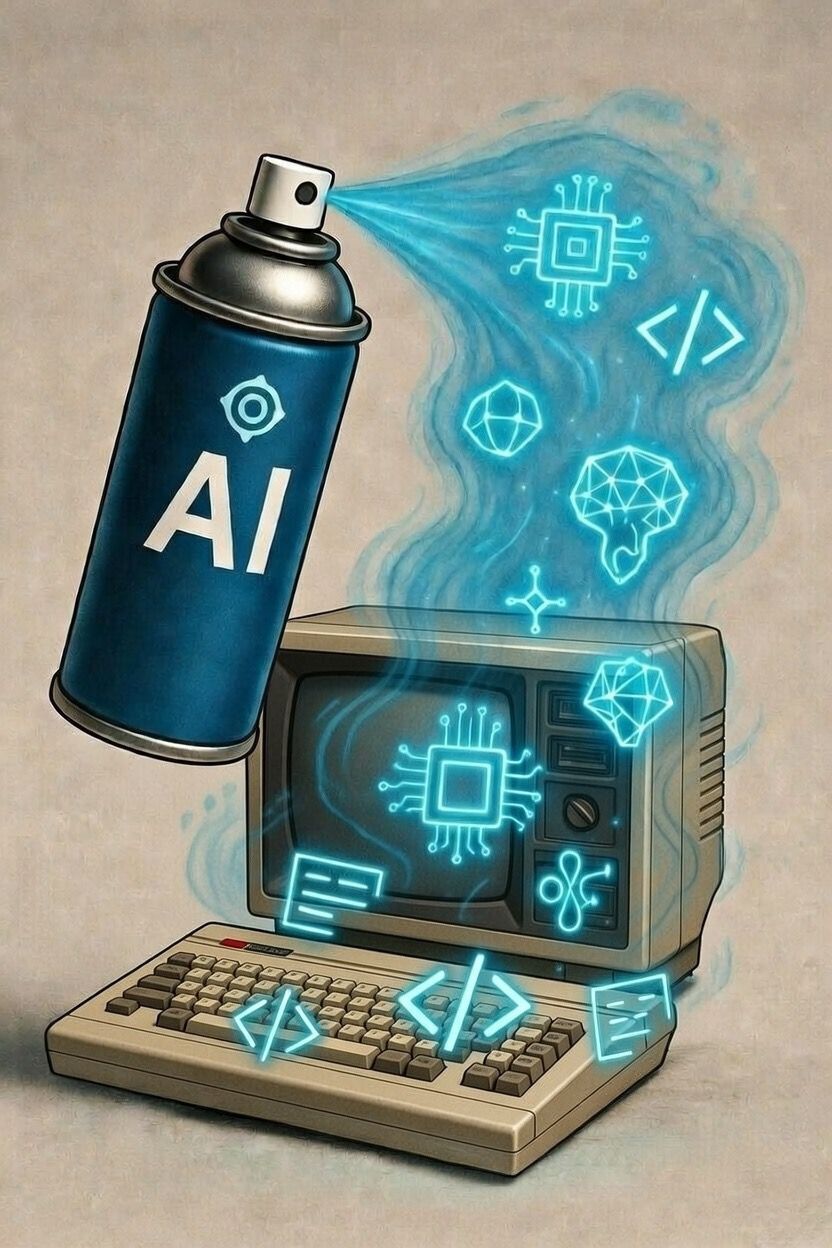

AI Spray: Why Spraying AI on Broken Systems Doesn't Make Them Better

AI has become the universal answer to business problems. The service doesn't convert? Add an AI chatbot. The process is inefficient? Automate it with an agent. The data is chaotic? A model will put it in order.

The implicit assumption is always the same: if we add AI, the service improves. It's a reassuring, simple idea, easy to sell to boards and teams, but it's also profoundly wrong.

A mistake already made with design

This logic is not new. For years, hundreds of organizations have “sprayed design” on structurally poor products and services, expecting that a visual restyling or some superficial intervention would solve underlying problems. It didn't work then. It won't work with AI either.

AI isn't a magic wand; it's a technology that amplifies what it finds. If what it finds is confusing and built on basic errors, it will amplify that too.

From Design Spray to AI Spray

The term “design spray” was popularized by John Maeda, a designer and technologist who has observed how companies misuse design, applying it as a cosmetic surface layer on software and services that remain structurally inadequate underneath. A more modern interface is added, a restyling is done, but the processes and the underlying logic remain unchanged.

Today the same mistake is being replicated with AI, only in this case the impact is more profound, the costs higher and the consequences harder to manage.

What AI spray means

Let's call it AI spray: using AI as a surface layer, without redesigning the underlying service. It is the idea that inserting a large language model or an AI agent is enough to transform a mediocre experience into something innovative. It doesn't work like that.

Examples are everywhere. Chatbots placed on top of poorly designed support processes, which amplify confusion instead of resolving it. AI agents integrated into workflows that have never been formalized, which act on erroneous assumptions and produce unpredictable outputs. Automations built on inconsistent data, which scale the inconsistency at industrial speed.

The underlying problem is always the same: AI doesn't solve design problems; it brings them to the surface, often in ways we didn't expect.

Garbage In, Garbage Out (AI version)

There is a principle in machine learning that is more relevant today than ever: Garbage In, Garbage Out. If you feed a model dirty, incomplete, uncontextualized data, the model does not correct it. It processes it, builds on it, and returns outputs that seem plausible but are fundamentally wrong.
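One way to act on this principle is to check records before they ever reach a model. The sketch below is a minimal illustration in Python, not a complete data quality pipeline. The field names (`customer_id`, `issue`, `product`) and the helpers `find_problems` and `split_clean_and_rejected` are hypothetical and would need to match the real schema.

```python
from typing import Any

# Hypothetical required fields for a support ticket; adjust to the real schema.
REQUIRED_FIELDS = ("customer_id", "issue", "product")


def find_problems(record: dict[str, Any]) -> list[str]:
    """Return a list of human-readable problems found in one record."""
    problems = []
    for field in REQUIRED_FIELDS:
        value = record.get(field)
        # Treat absent values and blank strings as missing data.
        if value is None or (isinstance(value, str) and not value.strip()):
            problems.append(f"missing or empty field: {field}")
    return problems


def split_clean_and_rejected(records):
    """Separate records that can be passed on from those that need fixing."""
    clean, rejected = [], []
    for record in records:
        problems = find_problems(record)
        if problems:
            rejected.append((record, problems))
        else:
            clean.append(record)
    return clean, rejected


tickets = [
    {"customer_id": "C-1", "issue": "Cannot log in", "product": "portal"},
    {"customer_id": "C-2", "issue": "   ", "product": None},
]
clean, rejected = split_clean_and_rejected(tickets)
print(len(clean), "clean;", len(rejected), "rejected")
for record, problems in rejected:
    print(record["customer_id"], problems)
```

The point of the design is that rejected records are reported with their reasons rather than silently dropped, so the problem in the data source stays visible and can be fixed where it originates.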

The myth of the powerful model

The common mistake is to think that a more powerful LLM compensates for low-quality inputs. It doesn't. Even the most advanced model, applied to poor data, produces results that are more confident and more articulate, but just as wrong. And that's worse, because the mistake is harder to notice.

AI doesn't eliminate problems; it scales them. It takes what is broken (a process, a data source, an ambiguous business logic) and replicates it, accelerates it, distributes it on a large scale. The isolated error becomes systematic, the manageable friction becomes an operational blocker.

AI doesn't fix garbage; it just makes it faster, more complex and more expensive.

This is the direct consequence of AI spray. Instead of solving problems, we amplify them. And when this happens in agentic systems, where AI does not just respond but acts, the consequences are amplified further.

Why AI agents really fail

AI agents often fail. Enterprise implementations are abandoned, pilot projects don't scale. Every time that happens, we hear that 'the model was not powerful enough' or 'the technology is not ready yet'.

The truth is that the problem is not the model; it's the service into which that model was inserted.

The real systemic problems that lead to failure

AI agents fail because they are integrated into services not designed to accommodate them: processes that were never formalized, workflows with ambiguous responsibilities, improvised integrations between systems that don't speak the same language, and a total absence of adoption metrics, observability, and clear success criteria.

An AI agent needs structure: it must know when it can act, on which data, with what constraints, and when it must stop. If this structure does not exist, the agent cannot compensate for it. It simply reflects the chaos and amplifies it.

The problem is not which AI, but which service you put it in. Until we accept this premise, we will keep seeing AI projects that promise innovation but bring only frustration and new problems.
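The four elements of that structure (when the agent can act, on which data, with what constraints, when it must stop) can be written down explicitly. The following Python sketch is one possible way to do so under stated assumptions: `AgentPolicy`, `authorize`, the refund limit, and the action and source names are all hypothetical, chosen only to illustrate the idea.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical description of the structure an agent operates within."""
    allowed_actions: frozenset[str]   # when it can act
    allowed_sources: frozenset[str]   # on which data
    max_refund_eur: float             # with what constraints
    max_steps: int                    # when it must stop


def authorize(policy, action, source, amount_eur, step):
    """Return (allowed, reason) for one proposed agent step."""
    if step >= policy.max_steps:
        return False, "step limit reached: hand over to a human"
    if action not in policy.allowed_actions:
        return False, f"action not permitted: {action}"
    if source not in policy.allowed_sources:
        return False, f"data source not permitted: {source}"
    if amount_eur > policy.max_refund_eur:
        return False, "amount exceeds limit: escalate"
    return True, "ok"


policy = AgentPolicy(
    allowed_actions=frozenset({"issue_refund", "send_reply"}),
    allowed_sources=frozenset({"orders_db"}),
    max_refund_eur=50.0,
    max_steps=5,
)

print(authorize(policy, "issue_refund", "orders_db", 20.0, step=0))
print(authorize(policy, "delete_account", "orders_db", 0.0, step=1))
print(authorize(policy, "issue_refund", "orders_db", 200.0, step=2))
print(authorize(policy, "send_reply", "orders_db", 0.0, step=5))
```

Writing the policy is the easy part. Filling in its values requires the service to have already decided who is responsible for what, which is exactly the work that AI spray skips.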

Why AI spray is dangerous

AI spray is not only ineffective, it's counterproductive. And its consequences are concrete, measurable, and quick to appear.

- It increases complexity without increasing value.

Every layer of AI added to an already fragile system introduces new breaking points, new dependencies, new sources of error. The system becomes harder to maintain, more expensive to evolve, less predictable.

- It hides problems instead of solving them.

AI may give the illusion that things work, but beneath the surface the structural problems remain intact, and when they emerge they do so in a more serious form.

- It leads to a loss of trust in AI.

When an AI agent doesn't work as promised, users don't think 'the service was poorly designed'; they think 'the AI doesn't work'. This damages the credibility of all subsequent AI initiatives.

AI spray is not a minor strategic mistake. It is a choice that compromises the ability to innovate, because it consumes resources, burns credibility and builds on already shaky foundations.

The alternative: designing the service before AI

The right approach doesn't start with AI; it starts with the service. Before choosing which model to use, you need to verify that the service has a solid foundation:

- clear and formalized processes;

- defined and documented flows;

- assigned and verifiable responsibilities;

- reliable, contextualized and quality data.

If these foundations do not exist, AI will not solve anything. On the contrary, it will make the situation worse by amplifying every structural fragility.
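The four foundations listed above can be treated as a gate that a service must pass before any model is chosen. The snippet below is a deliberately simple sketch of that gate; the `ServiceFoundations` class, its field names, and the example service are hypothetical, and in practice each yes/no answer would come from a real audit rather than a hard-coded value.

```python
from dataclasses import dataclass, fields


@dataclass
class ServiceFoundations:
    """The four foundations as yes/no answers (hypothetical model)."""
    processes_formalized: bool
    flows_documented: bool
    responsibilities_assigned: bool
    data_reliable: bool


def missing_foundations(service):
    """Return the names of the foundations that are not yet in place."""
    return [f.name for f in fields(service) if not getattr(service, f.name)]


support_service = ServiceFoundations(
    processes_formalized=True,
    flows_documented=False,
    responsibilities_assigned=True,
    data_reliable=False,
)

gaps = missing_foundations(support_service)
if gaps:
    print("Fix the service first:", ", ".join(gaps))
else:
    print("Foundations in place: model selection can start.")
```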

Service Design as a foundation

AI agents must be designed as components of the service, applying the established principles of Service Design: map real user journeys to understand where the agent can actually add value, identify critical touchpoints and precisely define how the agent integrates into existing workflows.

But that's not enough. It is necessary to build observability metrics that make the agent's behavior transparent and allow us to understand what works and what needs to be corrected. It is necessary to implement continuous improvement cycles based on feedback from users, operational data and daily interactions.
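As an illustration of what such metrics might look like, the sketch below computes outcome rates from a log of agent interactions. It assumes, hypothetically, that every conversation ends with one of three labels (`resolved`, `escalated`, `abandoned`); a real service would define its own outcomes and collect them from its own systems.

```python
from collections import Counter

# Hypothetical outcome labels: one per agent conversation.
OUTCOMES = ("resolved", "escalated", "abandoned")


def summarize(outcomes):
    """Compute the share of each outcome over all logged interactions."""
    counts = Counter(outcomes)
    total = len(outcomes)
    if total == 0:
        # No data yet: report zero rates instead of dividing by zero.
        return {name: 0.0 for name in OUTCOMES}
    return {name: counts[name] / total for name in OUTCOMES}


log = ["resolved", "resolved", "escalated", "abandoned", "resolved"]
rates = summarize(log)
for name, rate in rates.items():
    print(f"{name}: {rate:.0%}")
```

Tracked over time, even rates this simple show whether a change to the service or to the agent actually improved anything, which is the starting point for the improvement cycles described above.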

The problem of AI spray is also one of poorly managed complexity. Technology after technology is added in the hope that it will solve structural problems, multiplying layers without creating value. John Maeda states that “the simplest way to achieve simplicity is through thoughtful reduction” and that “simplicity is about subtracting the obvious, and adding the meaningful.” This also applies to AI systems. We need to consciously resist the temptation to “spray” technology on every problem, and focus on what matters: well-structured processes, quality data, thoughtful integration, continuous observability.

This isn't red tape; it's the only way to make AI really work.

AI doesn't save poorly designed services

AI doesn't automatically transform a bad service into a good, innovative one. On the contrary, without design, AI is not innovation; it's just accelerated garbage. For this reason, the right question is not “How do we use AI?”, but “What process is robust enough to be powered by artificial intelligence?” If the answer is “none”, then we need to work first on the structure, then on the technology.

AI produces results when it is integrated into a system designed to accommodate it, not when it is sprayed on existing problems. In the same way, agents succeed when they are part of a well-structured service, not when they are an attempt to mask systemic fragilities. In both cases, innovation comes from design, not from the superficial application of advanced tools.

As long as we treat AI as a shortcut, we will build systems that are doomed to fail. And we will continue to wonder why artificial intelligence 'doesn't work', when the real problem is that maybe we didn't design anything that would actually work.
